Neural processing makes better photos

All of a sudden, everyone has become a better photographer, and this is due more to machine learning and the neural processing units in modern phones than to people mastering the skill.

The new Ethos-N78 NPU builds on the success of the Ethos-N77 and is based on the second-generation Scylla architecture. The design goals included increasing AI and machine learning performance, improving efficiency, offering extended configurability, and continuing a unified software and tools path.

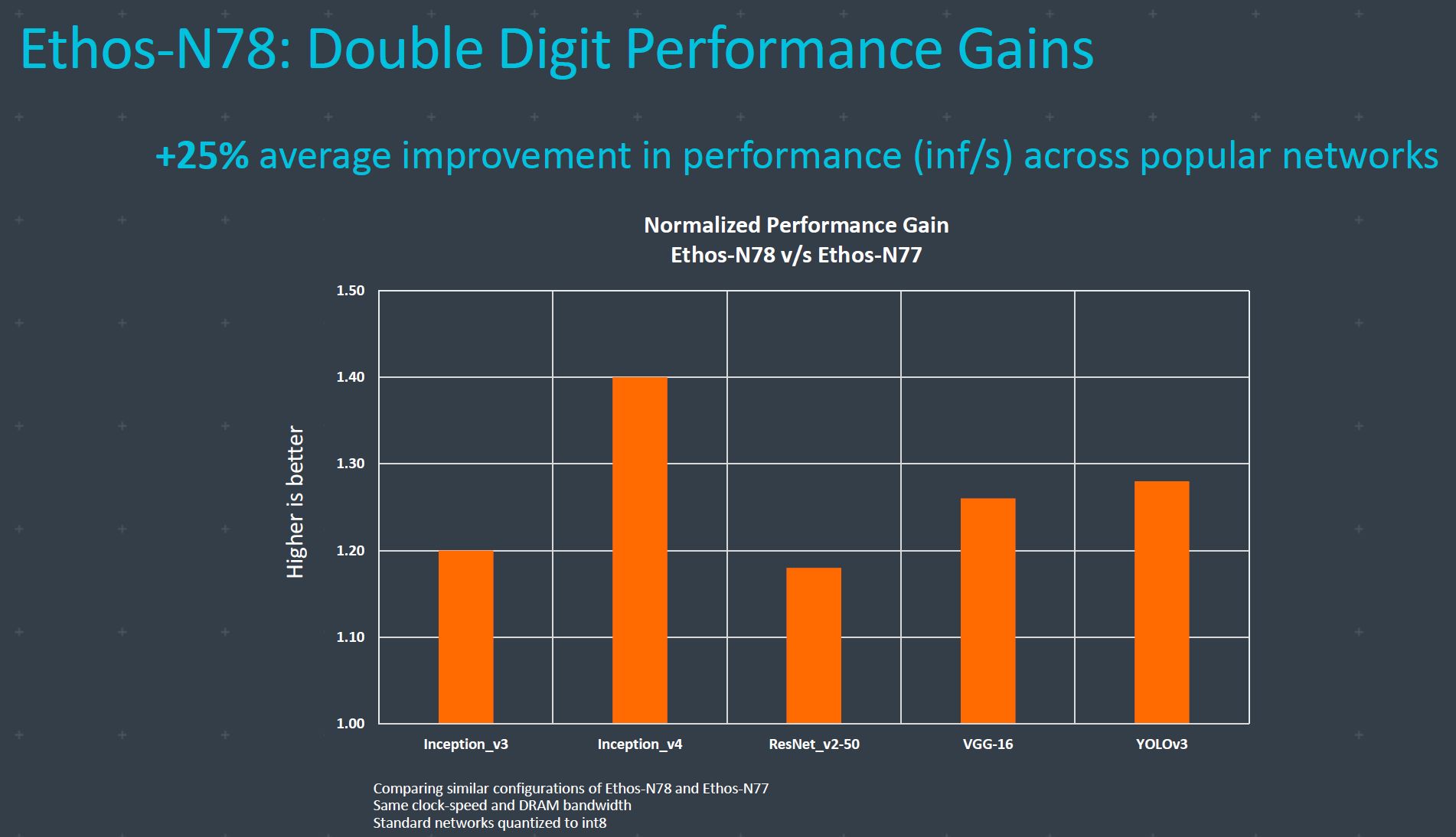

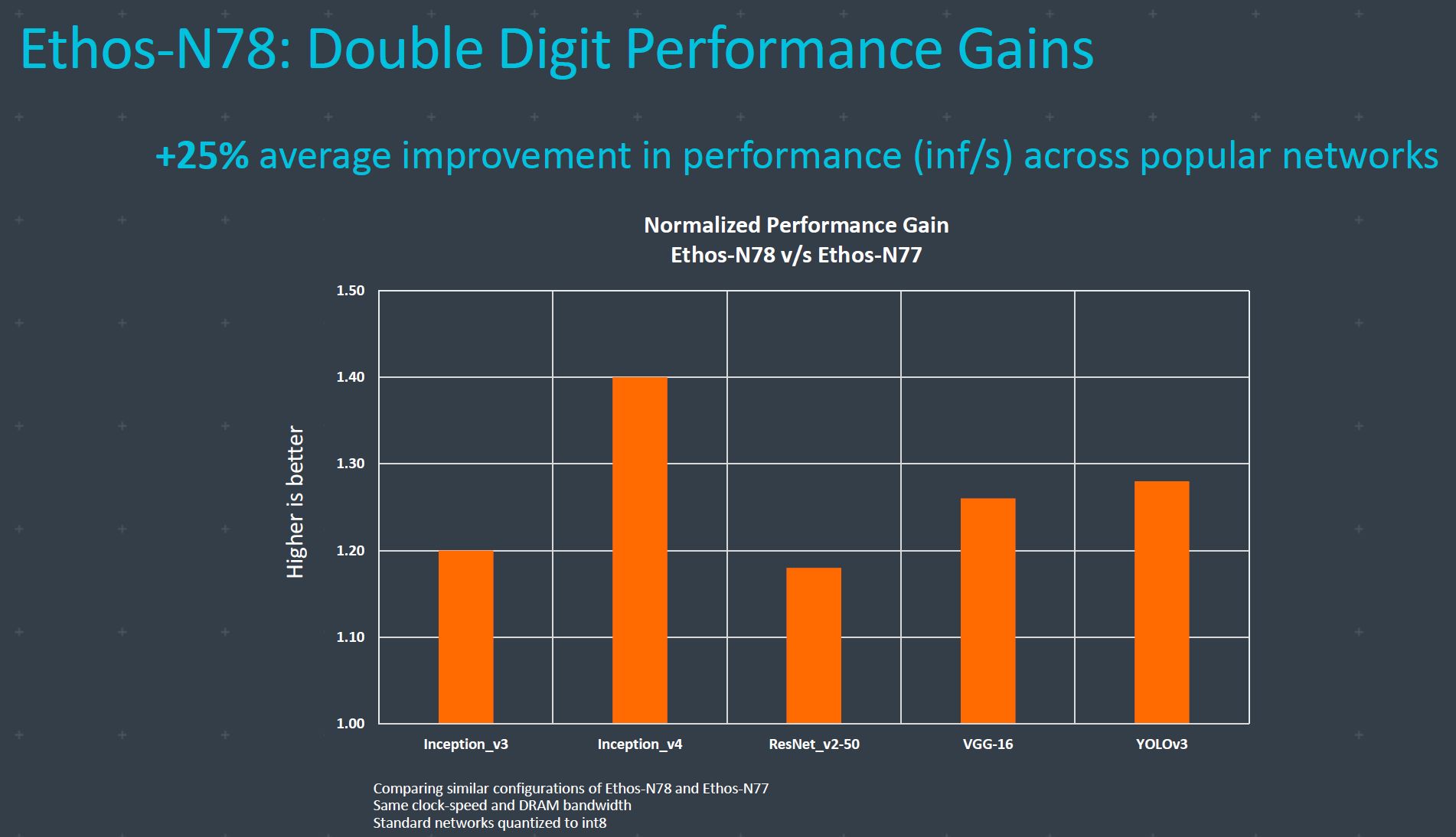

The top-of-the-line Ethos-N78 offers twice the peak performance of the Ethos-N77 when comparing the maximum number of MACs (multiply-accumulate units). Performance efficiency goes up by as much as 25 percent, depending on the neural network, while DRAM bandwidth efficiency increases by 40 percent. ARM licenses the technology, and customers can combine the Ethos building blocks and tune them for silicon area, power, or performance. The result is over 90 unique configurations rather than a one-size-fits-all strategy.

According to Ravi Mahatme, senior product manager, Machine Learning Group at ARM, the doubling of peak performance comes from internal compression. Machine learning and AI tasks are a three-dimensional problem: depending on the workload, they can be bound by compute, by DRAM bandwidth, or by vector processing.

Performance

Depending on the configuration, the Ethos-N78 delivers between 1 and 10 TOP/s (tera operations per second). Customers can size the SRAM to optimize DRAM bandwidth or configure the vector engines. Balancing these three resources yields the right performance, bandwidth, and energy efficiency for the neural processor.
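Peak TOP/s figures like these usually follow from simple arithmetic on the MAC array: each multiply-accumulate counts as two operations (a multiply and an add), so peak throughput is 2 × MACs × clock frequency. The sketch below assumes that convention; the MAC counts and the 1 GHz clock are hypothetical values chosen for illustration, not published Ethos-N78 specifications. It also shows why doubling the maximum MAC count doubles peak performance.

```python
# Minimal sketch of peak-throughput arithmetic for a MAC array.
# Assumption: one multiply-accumulate = 2 operations (multiply + add),
# the usual convention behind TOP/s figures.

def peak_tops(mac_units: int, clock_ghz: float) -> float:
    """Return peak tera-operations per second for a MAC array."""
    ops_per_cycle = 2 * mac_units                  # multiply + accumulate
    ops_per_second = ops_per_cycle * clock_ghz * 1e9
    return ops_per_second / 1e12                   # convert to tera-ops

# Hypothetical configurations at a hypothetical 1.0 GHz clock.
for macs in (256, 1024, 2048, 4096):
    print(f"{macs:5d} MACs @ 1.0 GHz -> {peak_tops(macs, 1.0):.3f} TOP/s")
```

Real sustained throughput is lower than this peak whenever a network is DRAM-bound or vector-bound, which is why the SRAM and vector-engine choices matter as much as the MAC count.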

Dennis Laudick, vice president of marketing, Machine Learning Group at ARM, elaborated, saying that customers have been asking for more capability and higher performance. This is where the NPU (machine learning and artificial intelligence) comes in to make you a better photographer.

In any kind of processor, it all comes down to data processing. The most obvious tasks are classification and object detection, which help you take better pictures. Modern phone cameras can detect people’s faces and make sure they are in focus. They can detect food and optimize the scene for Instagram.

Other important tasks are super-resolution and speech recognition. Rachel Trimble, principal engineer, Machine Learning Group at ARM, said that images are hard to process, and generating voice is also an arduous computational task. Security anomaly detection and Android text prediction are further tasks for AI on the NPU.

The Ethos-N78 will result in more responsive AI applications, lower silicon cost, and lower power for longer battery life.

Let’s talk numbers. The Ethos-N78, in a configuration similar to last year’s Ethos-N77, scores 20 percent higher in Inception_v3. If you just joined the AI class, Inception_v3 is a convolutional neural network for image analysis and object detection. The Ethos-N78 scores 40 percent better in Inception_v4, slightly less than 20 percent better in ResNet_v2-50, around 25 percent better in VGG-16 (OxfordNet), and slightly less than 30 percent better in YOLOv3 (a real-time object detection network).

Configurations

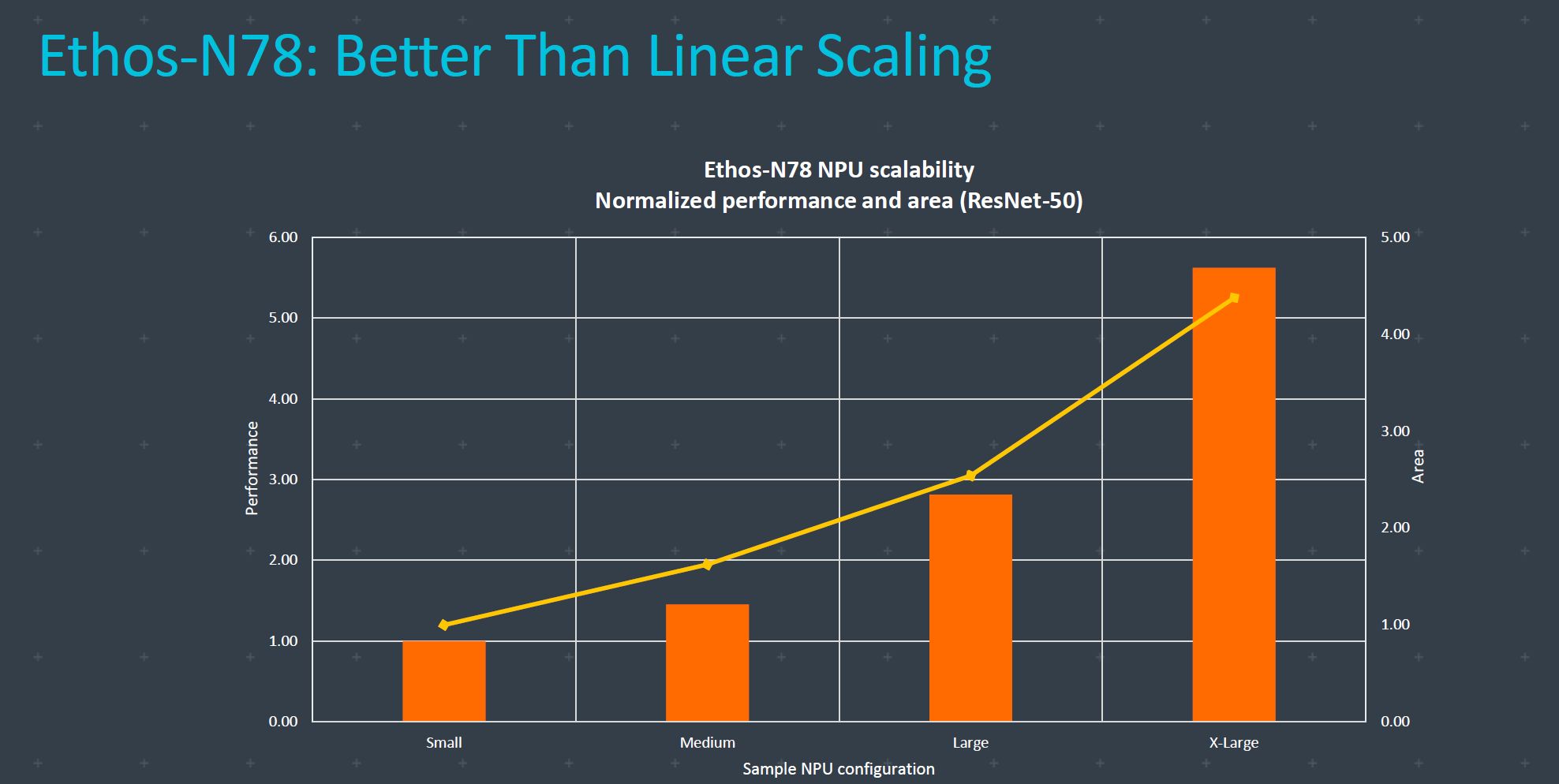

Talking to ARM, we learned that Ethos-N78 NPU scalability, normalized for performance and area, is better than linear in ResNet-50. Taking a small NPU design as the reference point, the medium design is already close to 50 percent faster. The large design is almost three times faster than the small one, while the X-Large design is slightly less than six times faster.

We mentioned the reduction in DRAM traffic earlier: ARM claims a 40 percent reduction in DRAM traffic per inference (MB/inf) across popular networks. The benchmark shows close to 30 percent less DRAM traffic in Inception_v3, around 45 percent less in Inception_v4, 25 percent less in ResNet-50, and more than 55 percent less in YOLOv3_608x608.
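To see what a per-inference reduction means in practice, the sketch below does two pieces of arithmetic. First, it takes the approximate per-network figures quoted above at face value (rounded to the stated numbers, so "more than 55" becomes 55) and averages them; ARM does not say how it averages its 40 percent figure, so this is only a sanity check. Second, it converts MB/inf into sustained DRAM bandwidth at a given inference rate. The 100 MB/inf workload and the 30 inferences per second are hypothetical values, as are the helper names `traffic_after` and `dram_bandwidth_mb_s`.

```python
# Approximate per-network DRAM traffic reductions quoted in the article
# (percent, Ethos-N78 vs. Ethos-N77), rounded to the stated figures.
quoted_reduction_pct = {
    "Inception_v3": 30,
    "Inception_v4": 45,
    "ResNet-50": 25,
    "YOLOv3_608x608": 55,
}

def traffic_after(mb_per_inf_before: float, reduction_pct: float) -> float:
    """DRAM traffic per inference after a percentage reduction."""
    return mb_per_inf_before * (1 - reduction_pct / 100)

def dram_bandwidth_mb_s(mb_per_inf: float, inferences_per_s: float) -> float:
    """Sustained DRAM bandwidth (MB/s) needed at a given inference rate."""
    return mb_per_inf * inferences_per_s

mean_pct = sum(quoted_reduction_pct.values()) / len(quoted_reduction_pct)
print(f"Simple mean of quoted reductions: {mean_pct:.2f}%")  # 38.75%

# Hypothetical workload: 100 MB/inf before, 30 inferences per second.
before = 100.0
after = traffic_after(before, 40)
print(f"Bandwidth before: {dram_bandwidth_mb_s(before, 30):.0f} MB/s")
print(f"Bandwidth after:  {dram_bandwidth_mb_s(after, 30):.0f} MB/s")
```

The simple mean lands in the same range as ARM's claim, and because bandwidth scales linearly with MB/inf, a 40 percent cut in traffic per inference translates directly into 40 percent less DRAM bandwidth at the same frame rate.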

Software

ARM is using a write-once, deploy-everywhere software stack strategy. The programmer writes the ML code once, and support for heterogeneous deployment determines whether the workload runs on the ARM Cortex-A CPU, Mali GPU, or Ethos-N NPU.
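The article does not describe how the stack decides where each piece of work runs. One common approach, similar in spirit to the preference-ordered backend lists in Arm's Arm NN SDK, is to walk a network layer by layer and place each layer on the first backend in a preference list that supports its operator, falling back toward the CPU. The sketch below models only that idea; the operator-support sets and the `assign_backends` helper are hypothetical and do not reflect real Ethos-N, Mali, or Cortex-A coverage.

```python
# Hypothetical model of heterogeneous deployment with per-layer fallback.
# The supported-operator sets are invented for illustration only.
from typing import Dict, List, Set

SUPPORTED_OPS: Dict[str, Set[str]] = {
    "Ethos-N NPU": {"Conv2D", "DepthwiseConv2D", "Relu", "MaxPool"},
    "Mali GPU": {"Conv2D", "DepthwiseConv2D", "Relu", "MaxPool", "Softmax"},
    "Cortex-A CPU": {"Conv2D", "DepthwiseConv2D", "Relu", "MaxPool",
                     "Softmax", "ArgMax"},
}

def assign_backends(layers: List[str], preferences: List[str]) -> List[str]:
    """Place each layer on the first preferred backend that supports it."""
    placement = []
    for op in layers:
        for backend in preferences:
            if op in SUPPORTED_OPS[backend]:
                placement.append(backend)
                break
        else:  # no backend in the preference list supports this operator
            raise ValueError(f"No backend supports {op}")
    return placement

network = ["Conv2D", "Relu", "MaxPool", "Softmax", "ArgMax"]
prefs = ["Ethos-N NPU", "Mali GPU", "Cortex-A CPU"]
for op, backend in zip(network, assign_backends(network, prefs)):
    print(f"{op:8s} -> {backend}")
```

Keeping the CPU last in the list means any operator the accelerators lack still runs somewhere, at the cost of moving data between processors. A real runtime would also weigh that transfer cost, which this sketch ignores.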

The Ethos-N78 supports online (interpreted) compilation on Android devices through the Android NNAPI, and offline (TVM) compilation for embedded devices.

ARM's developer tools provide ML performance insights in ARM Development Studio, which can be used for profiling and debugging across Arm IP. The new ARM developer tools also bring enhanced neural network performance analysis, with new ML event trace visualizations in ARM Mobile Studio, which is used for application and game optimization.

Entry-level phones, smart cameras, and DTVs typically use super-resolution to upscale videos and images from 720p to 1080p or even 4K. These devices need between one and two TOP/s for these tasks.

Mainstream phones and smart home hubs primarily use classification and automatic speech recognition (ASR), and usually need between two and four TOP/s for these tasks. Some categories overlap in their use cases, as an entry-level smartphone or a smart TV can also use some classification.

The most demanding tier is computational photography: premium phones and computers would use all of the AI abilities, including classification, object detection, super-resolution, automatic speech recognition (ASR), and segmentation. They would need between five and ten TOP/s for these tasks.
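The three device tiers above can be summarized as a small lookup. The task lists and TOP/s ranges come from the article; the tier names and the `pick_tier` helper are illustrative assumptions, not an ARM tool. The covering rule (pick the least demanding tier whose task list includes every requested workload) is a deliberate simplification that ignores the overlap between categories mentioned above.

```python
# Use-case tiers and compute budgets (TOP/s) as described in the article.
# Tier names and pick_tier are hypothetical, for illustration only.
from typing import Dict, Set, Tuple

TIERS: Dict[str, Tuple[Set[str], Tuple[float, float]]] = {
    "entry": ({"super-resolution"}, (1, 2)),
    "mainstream": ({"classification", "ASR"}, (2, 4)),
    "premium": ({"classification", "object detection", "super-resolution",
                 "ASR", "segmentation"}, (5, 10)),
}

def pick_tier(workloads: Set[str]) -> Tuple[str, Tuple[float, float]]:
    """Return the least demanding tier whose task list covers all workloads."""
    for name in ("entry", "mainstream", "premium"):
        tasks, tops_range = TIERS[name]
        if workloads <= tasks:  # every requested workload is in this tier
            return name, tops_range
    unknown = workloads - TIERS["premium"][0]
    raise ValueError(f"Unknown workloads: {unknown}")

for request in ({"super-resolution"}, {"classification", "ASR"},
                {"object detection", "segmentation"}):
    name, (low, high) = pick_tier(request)
    print(f"{sorted(request)} -> {name}: {low}-{high} TOP/s")
```

Combining workloads from two lower tiers, such as super-resolution plus classification, falls through to the premium budget here; in practice a device maker would size the NPU for the actual mix.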

Expect to see the Ethos-N78 in 5nm designs in 2021 phones and laptops, though ARM confirmed that the IP can also be used on 7nm or larger manufacturing processes.